Some time ago, I followed a discussion in a LinkedIn group for NPS professionals about asking two or more questions in NPS surveys. We have seen many cases that show why you shouldn’t, and some that show why you should, ask more questions, so I wanted to share these learnings and insights with the group and with anyone struggling with this.

There are four important arguments in favor of asking only two questions, and our data backs them all up. Before diving into them, however, we have to look at the philosophy behind the Net Promoter Score, or the Net Promoter System as it is increasingly called.

NPS was launched precisely to fight the long surveys and market research tools that were being thrown at customers. Fred Reichheld's team researched this one magical question because they knew people were growing tired of long surveys. People stopped filling them out and got frustrated with them, and friction is exactly what you want to avoid if you want to be customer centric. From that perspective, it doesn't make sense to expand NPS with additional questions.

But hear me out, I'm not ruling anything out. Every decision, whether in favor of or against more questions, needs to be taken with a knowledge strategy in mind.

So, with the NPS spirit and methodology in mind, we find these four arguments to be true in all of our client cases.

With each additional question, the response rate drops by approximately 10%. Knowing that response rates for two-question surveys average between 15% and 45%, you just don’t want to lose any more respondents!
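To get a feel for what that drop means in practice, here is a minimal sketch of the arithmetic. The article does not say whether the roughly 10% is relative (10% of the remaining responses) or absolute (10 percentage points), so the hypothetical function `expected_response_rate` supports both readings. The function name, its parameters and the compounding model are assumptions made for illustration, not a measured model.

```python
def expected_response_rate(base_rate, extra_questions, drop=0.10, relative=True):
    """Estimate the response rate after adding questions to a two-question survey.

    base_rate: response rate of the two-question survey as a fraction (0.30 = 30%).
    drop: assumed loss per extra question (0.10 = 10%).
    relative=True  -> each extra question removes 10% of the remaining responses.
    relative=False -> each extra question removes 10 percentage points.
    """
    if relative:
        rate = base_rate * (1 - drop) ** extra_questions
    else:
        rate = base_rate - drop * extra_questions
    # A response rate cannot go below zero.
    return max(rate, 0.0)


if __name__ == "__main__":
    # Walk through the 15% and 45% ends of the range mentioned in the text.
    for base in (0.15, 0.45):
        for extra in range(4):
            rel = expected_response_rate(base, extra)
            pts = expected_response_rate(base, extra, relative=False)
            print(f"base {base:.0%}, +{extra} questions: "
                  f"relative {rel:.1%}, percentage points {pts:.1%}")
```

Under either reading, a survey that starts at the low end of the range has very little room left after two or three extra questions.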

The whole purpose of NPS is to have a neutral way of surveying your customers about their experience with your brand and/or your staff. Losing this neutrality can cause your customers to start fretting about something they didn’t care about in the first place, and/or distract them from what they actually wanted to tell you.

For example, an insurance company sends out a paper survey once a year to all of its customers. That survey contains the NPS question, and the follow-up question is: ‘what could we do better?’. Instead of allowing an open-ended answer, they supply the answers themselves as multiple choice. First off: how nice that they already know the answers. Perhaps they should have worked on them already? But secondly, suggesting ‘pay out sooner after a claim’ obviously triggers customers to think ‘hey, yes, why do you guys delay in paying out??’. Suddenly you’re giving customers a reason to be dissatisfied, even if it wasn’t a reason to them BEFORE the survey.

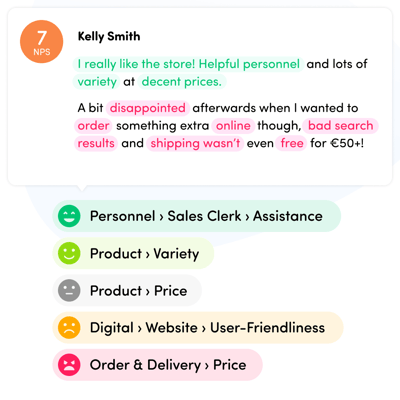

Not only can extra questions influence how people think of you, they can also influence their response behaviour in the second question. We've seen many times that adding a few extra questions results in the open feedback being exclusively about those topics. You're fostering tunnel vision.

This relates to my second argument, of course. If you draw up an NPS survey with multiple questions, these questions are drawn up from YOUR perspective: stuff you think is important for customer experience. NPS is aimed at getting an outside perspective, your customers’ perspective. By adding multiple extra questions, you will only find out what you expected to find out.

We have a few customers that were running NPS long before they started using our service. In most cases NPS was combined with additional questions. What we tend to see in the results of our two-question campaigns is that the NPS is higher. NPS is an emotional score, very much in the moment, which means that if you cause friction, customers may give you a lower score than they intended to.

People who suggest that having more questions is better are right too, you know?

In the long run, having additional campaigns with a small set of extra questions can help you get more ‘proven’ insights. Such a campaign may confirm (or not) what is indicated as your key drivers of (dis)satisfaction. However, these multi-question campaigns need to fit within a long-term NPS strategy.

Suppose you are running a continuous transactional NPS programme on a certain touchpoint and you have gathered a lot of insights as to what the drivers of your particular score may be. I am deliberately saying MAY be, because neither manual analysis of open feedback nor automated feedback analysis can give 100% certainty.

It may be worthwhile to create a separate NPS campaign, diverting x% of your customers toward that campaign after their interaction. In this separate campaign you add more questions. Mind you: more 0-10 scale questions, based on the trends you are seeing.
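One way to divert a fixed share of customers is to hash a stable customer identifier, so the same customer always lands in the same campaign. The sketch below is only an illustration: the function name `assign_campaign`, the campaign labels and the idea of hashing the customer ID are assumptions, not a description of how any particular survey tool does it.

```python
import hashlib


def assign_campaign(customer_id: str, extended_share_pct: float) -> str:
    """Return 'extended' for roughly extended_share_pct % of customers, else 'standard'.

    The assignment is deterministic: the same customer_id always gets the same result.
    """
    digest = hashlib.sha256(customer_id.encode("utf-8")).hexdigest()
    # Map the hash onto a bucket in [0, 100) with two decimals of resolution.
    bucket = (int(digest[:8], 16) % 10_000) / 100
    return "extended" if bucket < extended_share_pct else "standard"


if __name__ == "__main__":
    # Hypothetical customer IDs; 10% is an arbitrary example value for x.
    ids = [f"customer-{i}" for i in range(10_000)]
    extended = sum(assign_campaign(cid, 10) == "extended" for cid in ids)
    print(f"{extended / len(ids):.1%} of customers diverted to the extended campaign")
```

Keeping the assignment deterministic means a returning customer is not bounced between the two-question survey and the longer one.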

Let’s say in this hypothetical case you are a consumer bank and you are running a transactional NPS for the specific touchpoint ‘new customer sign-up in the bank agency’. You’ve been running this for two years, and the analysis of the open feedback tells you that the two key drivers for your NPS are:

Now could be the time to double check this by creating an alternative campaign adding the following questions:

From the incoming data you can then try and correlate the scores of these questions to your NPS.
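As a rough illustration of that correlation step, the sketch below computes the NPS (share of promoters scoring 9-10 minus share of detractors scoring 0-6) and a Pearson correlation between each extra 0-10 question and the recommend score. The names `driver_a` and `driver_b` are placeholders for whatever driver questions you add, and the numbers are made up purely for the example.

```python
from math import sqrt


def nps(recommend_scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    n = len(recommend_scores)
    promoters = sum(1 for s in recommend_scores if s >= 9)
    detractors = sum(1 for s in recommend_scores if s <= 6)
    return 100 * (promoters - detractors) / n


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long lists of scores."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up answers from five respondents, for illustration only.
recommend = [10, 9, 7, 4, 2]   # the NPS question (0-10)
driver_a = [9, 9, 6, 4, 1]     # placeholder for the first driver question (0-10)
driver_b = [5, 6, 5, 6, 5]     # placeholder for the second driver question (0-10)

if __name__ == "__main__":
    print(f"NPS: {nps(recommend):.0f}")
    print(f"driver_a vs recommend: r = {pearson(recommend, driver_a):.2f}")
    print(f"driver_b vs recommend: r = {pearson(recommend, driver_b):.2f}")
```

A high coefficient supports the idea that a driver moves together with the recommend score; it does not prove causation, and with small samples the coefficient can swing a lot.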

The beauty of this approach is that you can keep testing by trial and error for as long as needed. If no correlation is found for these drivers, it’s a signal to dive deeper into the feedback data and find other, perhaps hidden, drivers. You can test many potential drivers via this method.

To conclude this article, I guess it all comes down to finding the right balance between not frustrating customers, having good quality feedback and aiming to prove certain findings.
